Once you follow the practices that I preach about defining and maintaining your whole platform with code, you’re simplifying and speeding up the recovery process. It’s a huge gain for an organization that prepares for the worst. It’s part of the “Design for Failure” principle that has been a part of best practices within the platform architects’ community.

But that’s not enough. That’s only the beginning. Sure, keeping everything in code, using GitOps tools and practices, allows organizations to recover or even migrate their platforms more easily. How about data? Can it be protected in the same way? It turns out it’s a bit more complex. Data is the part we should take care of the most. Why?

Imagine you had a major outage. What would have a bigger impact on your business - lack of or incomplete configuration for the platform components or data that is corrupted or missing? You already know the answer, right? That’s why we need to protect the data with the utmost attention, and here I’m describing a simple way to ensure your data is recoverable.

Disaster Recovery Testing (DiRT)

Google’s approach to maintaining their huge platform for all sorts of services is called Site Reliability Engineering (SRE). One of the practices they have come up with is Disaster Recovery Testing (DiRT).

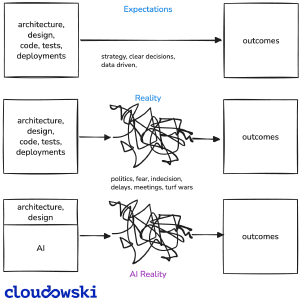

The rules are simple but hard to implement for many organizations. The purpose of this practice is to artificially create outages in your production environment to test its resilience and learn what needs to be improved. Sounds scary and indeed it is.

Few companies are prepared to mitigate the risk of an outage that is caused by their own employees. It also involves a lot of planning, coordination, courage from the leadership team and learning followed by the implementation of the fixes.

That’s why I haven’t seen many companies that do similar tests regularly. They often limit it to non-production environments and struggle with the fixes afterwards. There are myriad reasons why it doesn’t work for them. One is obvious: not every company is Google with vast resources, experience and well-defined risk management.

However, not all hope is lost. There’s a way for all those who want to design for failure without spending too much time or risking outages in the production environment.

Continuous Data Recovery Testing (CoDaRT)

Why not follow a similar code-based and automated approach to test the recoverability of the data? That’s the premise of Continuous Data Recovery Testing (CoDaRT). It’s an automated method to ensure that backups are recoverable and the data is valid.

Ensure your backup procedures are in place (and work)

That’s obvious and I believe most organizations do have backup procedures. I know that it’s a part of many compliance and internal audits. Sadly, in many cases those audits focus on proving that backups are performed, not that they are valid.

Create an automated job that restores data from backups

The first step of the test is to create a recurring job that would find the latest backup and restore it. The restoration process depends on the type of the data and it may require a lot of steps. For a database that would require spinning up a new instance or reusing an existing one in order to load the database dump.
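To make the "find the latest backup" part concrete, here is a minimal sketch. The record fields (`name`, `status`, `finished_at`) and the 26-hour freshness limit are my own assumptions for illustration; a real backup catalog will expose different names.

```python
from datetime import datetime, timedelta, timezone


def parse_ts(value):
    """Parse an RFC 3339 timestamp such as '2024-05-02T02:00:00Z'."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def newest_backup(backups, now, max_age=timedelta(hours=26)):
    """Return the most recent completed backup.

    Raises RuntimeError when there is no completed backup or when the
    newest one is older than max_age, so the job fails loudly.
    Field names are hypothetical; adapt them to your backup catalog.
    """
    completed = [b for b in backups if b.get("status") == "completed"]
    if not completed:
        raise RuntimeError("no completed backup found")
    latest = max(completed, key=lambda b: parse_ts(b["finished_at"]))
    age = now - parse_ts(latest["finished_at"])
    if age > max_age:
        raise RuntimeError(f"newest backup {latest['name']} is {age} old")
    return latest


if __name__ == "__main__":
    catalog = [
        {"name": "nightly-1", "status": "completed", "finished_at": "2024-05-01T02:00:00Z"},
        {"name": "nightly-2", "status": "completed", "finished_at": "2024-05-02T02:00:00Z"},
    ]
    now = datetime(2024, 5, 2, 3, 0, tzinfo=timezone.utc)
    print(newest_backup(catalog, now)["name"])
```

Failing on a stale backup is deliberate: a restore test that quietly restores last month's data would prove very little.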

This step is essential and is so much easier when the platform is already defined as code, because this process can depend on the components that can be created from code (e.g. a new database instance, Kubernetes cluster, VM, etc.). A huge benefit of this is that the process tests the data backup and also the code defining the platform components required by this operation.

Perform tests

After the data is restored a test must run in order to verify and validate it. This test can be a quick and simple smoke test just to check for the existence of particular records or it can be a sophisticated suite of tests. It all depends on the type of data and can also be combined with tests validating the infrastructure parts.
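A quick smoke test could look roughly like the sketch below. It only checks that a few tables hold at least a minimum number of rows. The function name, the table names and the thresholds are made up for illustration, and `sqlite3` is used purely as a stand-in so the example runs anywhere; the same function should work with any DB-API 2.0 connection to the restored database.

```python
import sqlite3


def run_smoke_test(conn, min_rows):
    """Check that each table holds at least the expected number of rows.

    conn is any DB-API 2.0 connection; min_rows maps table name -> minimum count.
    Returns a list of failure messages (an empty list means PASS).
    A missing table raises an exception, which also fails the job.
    """
    failures = []
    cur = conn.cursor()
    for table, minimum in min_rows.items():
        # Table names come from our own trusted config, never from user input.
        cur.execute(f"SELECT COUNT(*) FROM {table}")
        (count,) = cur.fetchone()
        if count < minimum:
            failures.append(f"{table}: expected >= {minimum} rows, found {count}")
    cur.close()
    return failures


if __name__ == "__main__":
    # Stand-in for the restored database.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER)")
    conn.execute("INSERT INTO customers VALUES (1)")
    print(run_smoke_test(conn, {"customers": 1}))
```

Returning a list of failures instead of stopping at the first one makes the job log more useful when several tables are affected at once.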

When tests succeed a proper event needs to be sent to the monitoring system. If any errors occur then either no event is sent or a special event with an error is created. I’d recommend only sending when the tests have passed. Read below to find out why.

Act on alerts

The final part is crucial and depends heavily on the organization’s culture. Setting up the previous parts may be time-consuming at first, but when it starts to work according to the schedule, the non-technical part comes into play and becomes the core of the CoDaRT.

So why send events on success only? Because it’s easier and behaves like a heartbeat signal. If no event has been published then an alert should be created. Then it needs to be verified and fixed if needed. An AI agent can do this now.
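The heartbeat rule itself fits in a few lines. This is only a sketch of the decision a monitoring system would make; the function name and the 25-hour window (a daily run plus some slack) are my assumptions, not a feature of any particular tool.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def heartbeat_missed(last_success: Optional[datetime], now: datetime,
                     max_silence: timedelta = timedelta(hours=25)) -> bool:
    """Return True when an alert should fire.

    The alert fires when no success event was ever received, or when
    the last one is older than max_silence.
    """
    if last_success is None:
        return True
    return now - last_success > max_silence


if __name__ == "__main__":
    now = datetime(2024, 5, 2, 12, 0, tzinfo=timezone.utc)
    yesterday = now - timedelta(hours=20)
    print(heartbeat_missed(yesterday, now))
    print(heartbeat_missed(None, now))
```

Notice that a crashed job, a deleted schedule and a failed test all look the same here: silence. That is the point of sending events on success only.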

Regardless of the option you choose, it’s a learning opportunity and a way the platform becomes more resilient over time. Especially if you extend this type of testing to more components defined with code.

This simplified model can be used for any type of data that is used to deliver business value. Because it’s an automated process, it’s easy to run daily, unlike the DiRT approach. Let’s compare these two methods to see the differences more clearly.

DiRT vs. CoDaRT

Here’s how DiRT and the aforementioned CoDaRT compare.

| | DiRT | CoDaRT |
|---|---|---|
| How often | Every few months | At least daily |
| Automated | No | Yes |
| Scope | All components | Data (optionally a subset of components) |
| Effort | Very High | Low |
| Impact | Very High | Medium / High |

Continuous testing is far less complicated and thus should be implemented in every modern platform. Protecting data is not about backups - it’s about evidence-based automated tests.

Example: CoDaRT for Postgres database

Let’s see how to apply this practice. I will describe an example process that tests the recovery of a Postgres database. I believe it’s a very common scenario for many organizations.

Environment

This scenario assumes that a database is running on a Postgres cluster maintained by the CloudNativePG operator on a Kubernetes cluster. It’s a battle-tested operator that provides features which simplify the management of the databases, including backup and recovery mechanisms.

Here are other vital ingredients used by the solution described below:

- Scheduled job - A CronJob definition with a custom Role that allows the Pod to use the Kubernetes API to query for backups and to create a temporary Postgres database through Custom Resources.

- Testing script - A script written in any language (e.g. Python) that includes the logic for testing. It is used as an execution entrypoint defined for a Pod created by a CronJob.

Backup Recovery Test Process

```mermaid
sequenceDiagram
    participant Pod
    participant K8sAPI as Kubernetes API
    participant CNPG as CloudNativePG Operator
    participant Secret as K8s Secret
    participant DB as PostgreSQL DB

    Pod->>Pod: Install dependencies
    Pod->>K8sAPI: List Backups
    K8sAPI->>CNPG: Query backup objects
    CNPG-->>K8sAPI: Return backups
    K8sAPI-->>Pod: Return backups
    Pod->>Pod: Select newest backup
    Pod->>K8sAPI: Create Cluster resource<br/>with bootstrap from backup
    K8sAPI->>CNPG: Cluster creation request
    CNPG->>CNPG: Validate backup recovery
    CNPG->>DB: Initialize cluster from backup
    activate DB
    Pod->>K8sAPI: Poll cluster status
    loop Wait for readiness
        K8sAPI->>CNPG: Get cluster status
        CNPG-->>K8sAPI: Status
        K8sAPI-->>Pod: Status update
    end
    Pod->>Secret: Read credentials
    Secret-->>Pod: DB credentials
    Pod->>DB: Connect and verify
    DB-->>Pod: Query result
    alt Data verified
        Pod->>Pod: Test PASS
    else No data
        Pod->>Pod: Test FAIL
    end
    deactivate DB
    Pod->>K8sAPI: Delete Cluster resource
    K8sAPI->>CNPG: Cleanup request
    CNPG->>DB: Teardown cluster
```
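The "Create Cluster resource with bootstrap from backup" step boils down to submitting a small Custom Resource. Below is a sketch that builds such a manifest as a plain Python dict, following the `bootstrap.recovery.backup` layout as I understand it from the CloudNativePG documentation. The function name, the default cluster name and the storage size are placeholders of mine; in practice the storage has to be large enough for the restored data.

```python
def restore_cluster_manifest(backup_name, namespace,
                             test_cluster_name="restore-test",
                             storage_size="1Gi"):
    """Build a CloudNativePG Cluster manifest that bootstraps from a Backup object.

    All default values are illustrative assumptions, not recommendations.
    """
    return {
        "apiVersion": "postgresql.cnpg.io/v1",
        "kind": "Cluster",
        "metadata": {"name": test_cluster_name, "namespace": namespace},
        "spec": {
            # A single instance is enough for a throwaway verification cluster.
            "instances": 1,
            "storage": {"size": storage_size},
            # Recover from an existing Backup resource in the same namespace.
            "bootstrap": {"recovery": {"backup": {"name": backup_name}}},
        },
    }


if __name__ == "__main__":
    import json
    print(json.dumps(restore_cluster_manifest("nightly-2", "databases"), indent=2))
```

With the official Kubernetes Python client, a dict like this could be passed as the `body` of `CustomObjectsApi.create_namespaced_custom_object` with group `postgresql.cnpg.io`, version `v1` and plural `clusters`. I left that call out so the sketch runs without cluster access.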

Execution Flow:

- Job Creation - CronJob creates a Job with retry limits and history tracking

- Pod Execution - Pod installs dependencies and runs the verification script.

- Backup Retrieval - Script finds the newest backup from the data stored in Kubernetes API. It is provided by the operator which makes it easy to query. At this point the process can fail if there’s no recent backup which signals a serious problem.

- Test Cluster Creation - Creates a temporary PostgreSQL cluster from the backup. The test script uses CloudNativePG Custom Resource.

- Cluster Readiness - Polls cluster status with 30-second intervals and a fixed timeout (e.g. 30min).

- Credentials - Fetches database credentials from Kubernetes secret created by the operator.

- Connection - Establishes database connection with automatic retries using the fetched credentials.

- Data Verification - Executes query to verify the data.

- Cleanup - Deletes the test cluster.

- Result - Reports test success or failure.
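The readiness step from the list above (poll every 30 seconds, give up after a fixed timeout) can be sketched as a small helper. The `is_ready` callable is hypothetical: in the real script it would ask the Kubernetes API for the test cluster's status. The `sleep` and `clock` parameters exist only so the loop can be exercised without actually waiting.

```python
import time


def wait_until_ready(is_ready, interval=30.0, timeout=1800.0,
                     sleep=time.sleep, clock=time.monotonic):
    """Call is_ready() every `interval` seconds until it returns True.

    Returns True on success, or False when the next attempt would
    fall after the `timeout` deadline.
    """
    deadline = clock() + timeout
    while True:
        if is_ready():
            return True
        if clock() + interval > deadline:
            return False
        sleep(interval)


if __name__ == "__main__":
    attempts = []

    def fake_ready():
        # Pretend the cluster becomes ready on the third poll.
        attempts.append(1)
        return len(attempts) >= 3

    # No real sleeping in the demo.
    print(wait_until_ready(fake_ready, sleep=lambda seconds: None))
```

Whatever the outcome, I'd wrap the steps after cluster creation in `try`/`finally` so the cleanup step still runs when readiness times out; otherwise every failed run leaves a test cluster behind.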

The result is visible in the status of the CronJob and of the Jobs it creates. A monitoring system reads that status and triggers an alarm when the verification fails or when there is no recent run at all.

Conclusion

Most organizations have backups. Far fewer have evidence that those backups actually work. That gap is where disasters happen — not during the outage, but months earlier when someone assumed the backup was fine because the job showed green.

CoDaRT closes that gap. It’s not complicated. A CronJob, a test script, and a heartbeat alert are enough to go from “we have backups” to “we know our data is recoverable.” The heartbeat pattern is deliberately simple: silence is the alarm. No special tooling required. Don’t wait for a real disaster to find out your backups were broken for six months. Run the test today, automate it tomorrow, and never think about it again.
